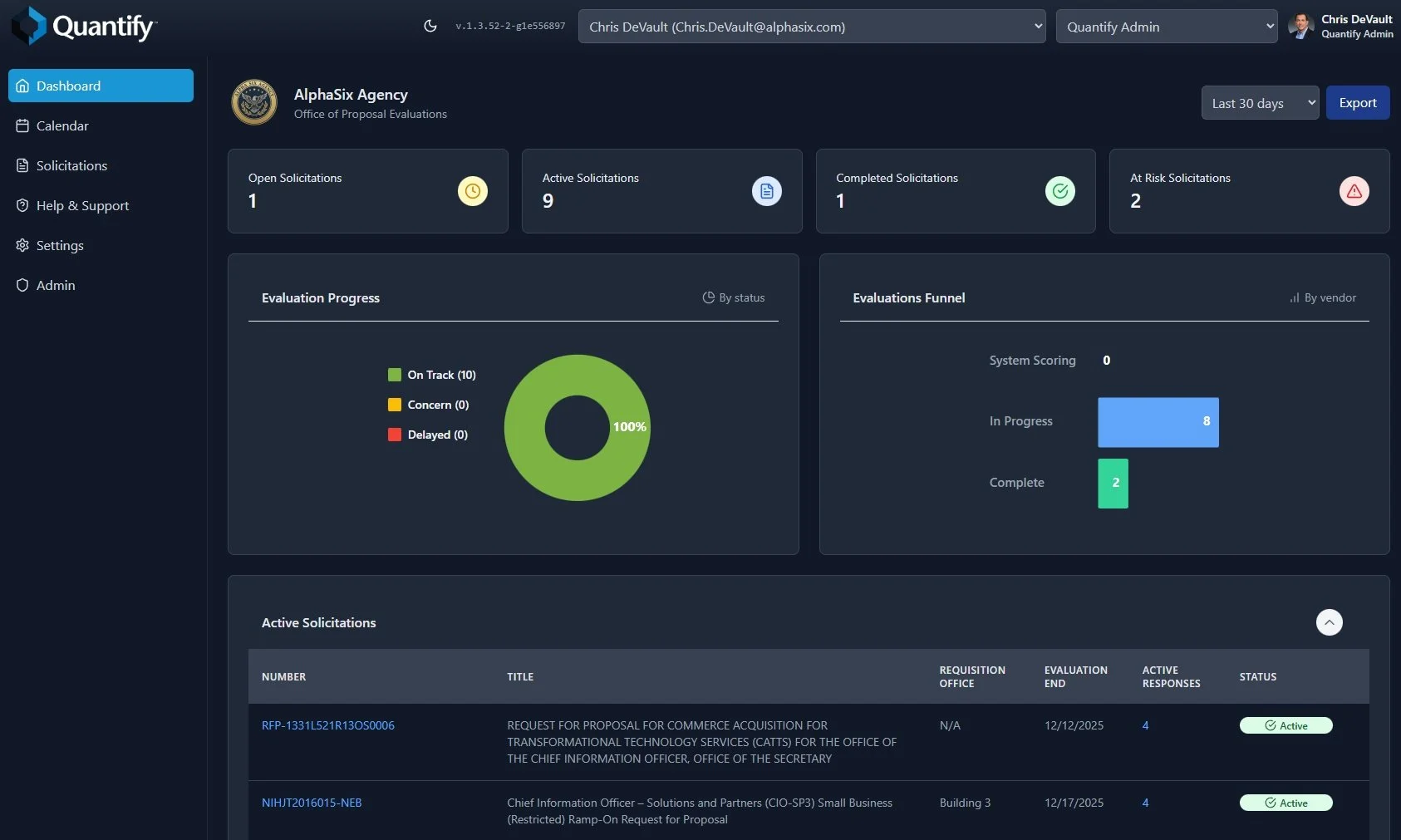

Complete AI Evaluation Support for Government

The most advanced proposal evaluation platform—built by former procurement officers and purpose-designed for source selection teams.

Evaluation teams can save up to 90% of their time.

Our patent-pending technology uses AI to extract evaluation criteria and match them to offeror responses, which substantially shortens the evaluation process.

-

Quantify incorporates several provisional technology and process patents. Full patent applications have been filed for each and are expected to be finalized in 2026.

LLM-Driven Real-Time RFI/RFP Scoring and Feedback System

Natural Language Interface for Elastic Cluster Management and Kibana Dashboard Automation

Anonymization and De-Anonymization of Sensitive Data for LLM Prompts

System and Method for Request for Proposal Value Scoring

Phase-Aware Dual-Output Conversational Structured Generation Architecture

-

Quantify is engineered as a federally compliant, on-premise or agency-controlled solution purpose-built for secure, AI-assisted evaluation of RFIs, RFPs, and other acquisition artifacts. Its architecture combines agentic AI, federated memory management, knowledge-graph–enhanced RAG, and hardened, FedRAMP-ready security controls to deliver the most advanced solicitation evaluation platform available to government evaluators today.

At the core of Quantify is a modular, microservices-based architecture optimized for high-assurance environments. Every architectural component—from the scoring engine to the document pipeline—was designed to operate inside federal networks, within agency-approved compute boundaries, and against agency-approved Large Language Models (LLMs). Quantify does not require data to leave the agency enclave, and the system is fully compatible with on-premise GPU systems, secure cloud environments, or hybrid deployments.

LLM Integration Layer

Quantify includes a flexible AI integration framework that supports any agency-approved LLM, including OpenAI, Anthropic, Grok, AWS Bedrock models, Azure Government AI, and fully air-gapped on-prem models running through Ollama or custom inference servers. This layer includes:

Automatic model-adapter selection

Token optimization and context management

Secure prompt sanitization and de-anonymization

Enclave-restricted inference pipelines

This ensures agencies maintain full control over model selection, model placement, data pathways, and inference security.
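As a rough illustration of adapter-based model selection, the sketch below routes a request only to backends that appear on an agency-approved list. Every name in it (`ModelAdapter`, `EchoAdapter`, `AdapterRegistry`, and the model names) is hypothetical and is not Quantify's actual API; the stand-in adapter echoes the prompt instead of calling a real model.

```python
from dataclasses import dataclass, field
from typing import Protocol


class ModelAdapter(Protocol):
    """Hypothetical interface that every model backend would implement."""

    name: str

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoAdapter:
    """Stand-in backend: echoes the prompt instead of calling a model."""

    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


@dataclass
class AdapterRegistry:
    """Routes requests only to adapters on the agency-approved list."""

    approved: set[str]
    adapters: dict[str, ModelAdapter] = field(default_factory=dict)

    def register(self, adapter: ModelAdapter) -> None:
        self.adapters[adapter.name] = adapter

    def select(self, name: str) -> ModelAdapter:
        # Approval is checked first, so an unapproved model is refused
        # even if an adapter for it happens to be installed.
        if name not in self.approved:
            raise PermissionError(f"model {name!r} is not agency-approved")
        if name not in self.adapters:
            raise KeyError(f"no adapter registered for {name!r}")
        return self.adapters[name]


registry = AdapterRegistry(approved={"on-prem-model"})
registry.register(EchoAdapter("on-prem-model"))
registry.register(EchoAdapter("external-model"))

reply = registry.select("on-prem-model").complete("Summarize Section M.")
print(reply)
```

Requesting `external-model` raises `PermissionError` here, because it was registered but never approved.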

Patented Agentic Memory + Retrieval Framework

Quantify’s architecture incorporates AlphaSix’s patented hierarchical memory management system, enabling the platform to maintain evaluation context across multiple documents, factors, sections, and scoring cycles. The system blends:

Short-term, task-scoped memory for immediate scoring and commentary

Long-term institutional memory for evaluator consistency

A combined Vector Store + Knowledge Graph (RAG+KG) retrieval engine for contextual grounding

Immutable memory artifacts for compliance and reproducibility

This architecture gives Quantify a unique capability: evaluators receive consistent, defensible scoring recommendations across teams, sessions, and performance periods.

Evaluation Engine & Agentic Orchestration

The Quantify scoring engine is powered by our internal QuantumDrive Orchestration Framework, which uses autonomous agents to perform:

Requirement extraction

Section M factor/subfactor analysis

Compliance checks

Generation of strengths, weaknesses, and deficiencies

Evaluation Notice (EN) drafting

Automated traceability and rationale creation

Agents can dynamically generate new tools—via our ToolRegistry and dynamic tool-creation system—to analyze documents, compare proposals, or enforce agency-specific scoring rules. This gives Quantify the adaptability required for every unique procurement environment.

Document Processing & Structured Reasoning Pipeline

Quantify ingests and processes documents at scale, supporting:

PDFs, Word documents, spreadsheets, structured data, and scanned content

OCR with confidence scoring

Duplicate detection and hashing

Metadata extraction and lineage tracking

These documents are transformed into structured evaluation objects that feed directly into the agentic scoring process.
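Duplicate detection by hashing can be sketched as follows. This is a minimal example that assumes byte-identical files count as duplicates and uses SHA-256 from the Python standard library; the function names and sample file names are illustrative, and the source does not describe which hash or matching rule Quantify uses.

```python
import hashlib


def content_digest(data: bytes) -> str:
    """Return the SHA-256 hex digest of a document's raw bytes."""
    return hashlib.sha256(data).hexdigest()


def find_duplicates(documents: dict[str, bytes]) -> dict[str, list[str]]:
    """Group document names by digest and keep groups with more than one member."""
    by_digest: dict[str, list[str]] = {}
    for name, data in documents.items():
        by_digest.setdefault(content_digest(data), []).append(name)
    return {digest: names for digest, names in by_digest.items() if len(names) > 1}


# Hypothetical uploads; the first two have identical content.
uploads = {
    "volume1.pdf": b"technical approach",
    "volume1_copy.pdf": b"technical approach",
    "volume2.pdf": b"past performance",
}
duplicates = find_duplicates(uploads)
for digest, names in duplicates.items():
    print(digest[:12], names)
```

Exact hashing only catches identical bytes. Two scans of the same page would hash differently, so near-duplicate detection would need a different technique.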

Scalable, High-Performance Infrastructure

Quantify’s microservices architecture allows it to scale horizontally to handle:

Large-volume solicitations

Multi-evaluator teams

Simultaneous scoring sessions

Multi-agency deployments

Its internal job scheduler and distributed compute paths prevent performance degradation and guarantee deterministic processing times even under heavy evaluator load.

Extensibility & Integration

Quantify is designed to be integrated into existing acquisition ecosystems including:

SharePoint and enterprise content repositories

Agency procurement systems

Workflow/orchestration platforms

Secure cloud or on-prem data lakes

Elastic clusters for analytics and auditing

Our adapter-based architecture makes Quantify interoperable without modifying agency infrastructure.

-

Quantify is engineered from the ground up as a federal-grade, zero-trust, on-premise or agency-controlled platform, purpose-built to safeguard sensitive acquisition data throughout the entire evaluation lifecycle. Every component—storage, transport, inference, memory, retrieval, agent orchestration, scoring logic, and auditability—is protected by multi-layered security controls designed to exceed the requirements of the Federal Acquisition Security Council (FASC), CISA, and agency-specific cybersecurity mandates.

Quantify never transmits data outside the customer’s secured environment and operates exclusively within agency-controlled infrastructure or approved cloud enclaves. This ensures that solicitation documents, proposal responses, evaluation notes, scoring rationales, and LLM-driven insights remain fully contained and protected.

Zero-Trust Security Architecture

Quantify implements a defense-in-depth model anchored in zero-trust principles:

No implicit trust between services or users: every request is authenticated, authorized, validated, and logged.

Service isolation through microservice boundaries: limits lateral movement and enforces privilege separation.

Strict policy enforcement on identities, agents, and tools: agents cannot access or execute functions outside their designated scope.

Zero trust governs both human evaluators and AI agents, ensuring consistent enforcement of access and execution policies.

Comprehensive Encryption & Data Protection

Encryption Everywhere

All data is protected using industry-standard and government-recommended cryptographic practices:

Encryption at Rest: AES-256 or other agency-specified algorithms, implemented in FIPS 140-3 validated cryptographic modules

Encryption in Transit: TLS 1.3+

In-Memory Sensitive Data Safeguards: ephemeral memory vaulting and immediate secure disposal

Document content, metadata, scoring results, and notes are encrypted at all stages of processing.

Anonymization Pipeline

Quantify includes an advanced, patent-pending anonymization and de-anonymization engine that:

Removes or masks vendor-identifying information

Neutralizes evaluator bias

Ensures fairness and consistency in LLM-generated summaries and scoring

Evaluators can perform blind scoring while still maintaining full traceability in audit logs.
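The general idea of reversible masking can be sketched as below: vendor names are replaced by placeholder tokens before text reaches a model, and a mapping kept on the agency side restores them afterwards. This is a simplified illustration under stated assumptions (the vendor names are already known, and matching is exact and case-sensitive); the `[OFFEROR_n]` token format and function names are hypothetical and do not describe Quantify's engine.

```python
def anonymize(text: str, vendor_names: list[str]) -> tuple[str, dict[str, str]]:
    """Replace each known vendor name with a placeholder token.

    Returns the masked text and the token-to-name mapping needed to reverse it.
    """
    mapping: dict[str, str] = {}
    masked = text
    # Replace longer names first so a name that contains another is masked whole.
    ordered = sorted(vendor_names, key=len, reverse=True)
    for index, name in enumerate(ordered, start=1):
        token = f"[OFFEROR_{index}]"
        mapping[token] = name
        masked = masked.replace(name, token)
    return masked, mapping


def deanonymize(text: str, mapping: dict[str, str]) -> str:
    """Restore the original names from the placeholder tokens."""
    for token, name in mapping.items():
        text = text.replace(token, name)
    return text


original = "Acme Corp proposes a hybrid cloud. Acme Corp has 10 years of experience."
masked, mapping = anonymize(original, ["Acme Corp"])
restored = deanonymize(masked, mapping)
print(masked)
```

The mapping never needs to be included in a prompt, which is what allows blind scoring while the audit log can still record the real offeror.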

Identity, Access, & Role-Based Control

Quantify integrates seamlessly with federal identity providers, including:

PIV/CAC

Microsoft Entra ID / Active Directory

Okta

Custom SAML / OAuth2 providers

Role-Based Access Control (RBAC)

Fine-grained RBAC ensures:

Evaluators only see documents, factors, or sections assigned to them

Administrative users cannot modify evaluation outcomes

LLM agents are restricted to read-only or analytical scopes unless explicitly authorized

Export and download permissions are limited by role and solicitation phase

All permissions are defined in least-privilege fashion and enforced uniformly across the platform.
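A deny-by-default permission check in the spirit of the rules above might look like the following sketch. The role names, the `Action` values, and the `is_allowed` helper are assumptions made for illustration and are not taken from the product.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Action(Enum):
    VIEW = "view"
    SCORE = "score"
    EXPORT = "export"
    MANAGE_USERS = "manage_users"


# Hypothetical role table: anything not listed for a role is denied.
ROLE_PERMISSIONS: dict[str, set[Action]] = {
    "evaluator": {Action.VIEW, Action.SCORE},
    "administrator": {Action.MANAGE_USERS},  # no SCORE: cannot alter outcomes
    "llm_agent": {Action.VIEW},              # read-only by default
}


@dataclass(frozen=True)
class User:
    name: str
    role: str
    assigned_factors: frozenset[str]


def is_allowed(user: User, action: Action, factor: Optional[str] = None) -> bool:
    """Allow only if the role grants the action and, when a factor is given,
    that factor is assigned to the user."""
    if action not in ROLE_PERMISSIONS.get(user.role, set()):
        return False
    if factor is not None and factor not in user.assigned_factors:
        return False
    return True


evaluator = User("evaluator_a", "evaluator", frozenset({"technical"}))
admin = User("admin_a", "administrator", frozenset())

print(is_allowed(evaluator, Action.SCORE, "technical"))
print(is_allowed(evaluator, Action.SCORE, "cost"))
print(is_allowed(admin, Action.SCORE, "technical"))
```

Unknown roles fall through to an empty permission set, so a misconfigured account is denied rather than granted access.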

Agent Governance & ToolRegistry Security

Quantify uses a secure ToolRegistry and patented dynamic tool-creation system that prevents unauthorized or unsafe actions.

Security features include:

Tool-level authorization policies

Execution sandboxing for agent-generated tools

Code validation and safety checks before invocation

Bounded execution with monitoring and rollback

Memory-governed reasoning limits to avoid hallucination-driven actions

This prevents agents from ever taking unauthorized actions or accessing data they should not have visibility into.
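Tool-level authorization, the first feature in the list above, can be illustrated with a small registry that checks the calling agent against an allow-list before invoking a tool. The class below is a hypothetical sketch that shares only its name with Quantify's ToolRegistry; sandboxing, code validation, and rollback are not shown.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ToolRegistry:
    """Maps tool names to a callable plus the set of agents allowed to call it."""

    _tools: dict[str, tuple[Callable[..., object], frozenset[str]]] = field(
        default_factory=dict
    )

    def register(
        self, name: str, func: Callable[..., object], allowed_agents: set[str]
    ) -> None:
        self._tools[name] = (func, frozenset(allowed_agents))

    def invoke(self, agent_id: str, tool_name: str, *args, **kwargs) -> object:
        if tool_name not in self._tools:
            raise KeyError(f"unknown tool {tool_name!r}")
        func, allowed = self._tools[tool_name]
        # The authorization check happens before any tool code runs.
        if agent_id not in allowed:
            raise PermissionError(f"{agent_id!r} may not call {tool_name!r}")
        return func(*args, **kwargs)


def word_count(text: str) -> int:
    """Example tool: count whitespace-separated words."""
    return len(text.split())


tools = ToolRegistry()
tools.register("word_count", word_count, {"compliance_agent"})

result = tools.invoke("compliance_agent", "word_count", "The offeror shall comply")
print(result)
```

A call such as `tools.invoke("scoring_agent", "word_count", "...")` raises `PermissionError`, because that agent is not on the tool's allow-list.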

FedRAMP-Ready & NIST-Aligned Controls

Quantify’s architecture aligns with:

FedRAMP Moderate/High baselines

NIST SP 800-53 Rev. 5

NIST SP 800-171

CISA Zero Trust Maturity Model

OMB M-22-09 & M-23-16 requirements

All system components are designed to operate within high-impact federal environments, including those involving procurement-sensitive or source-selection-sensitive information.

Immutable Audit Trails & Protest Defensibility

Every event in Quantify—human or AI—is recorded in a tamper-proof audit log, including:

Document uploads

Evaluator scoring actions

Factor/subfactor rationales

Agent outputs and tool usage

Revisions and overrides

Export activity and report generation

These logs enable:

GAO and Court of Federal Claims protest defense

IG investigations

Internal compliance auditing

Reproducible scoring workflows

Immutable audit trails preserve full traceability from source document to final award decision.
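One common way to make a log tamper-evident is a hash chain, where each entry's hash covers the previous entry's hash. The sketch below shows that technique using only the Python standard library; it is an assumption about how such a log could work, not a description of Quantify's implementation, and the event fields are invented for the example.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, event: dict) -> str:
    """Hash the previous entry's hash together with this event's content."""
    payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_event(log: list[dict], event: dict) -> None:
    """Append an event, linking it to the hash of the entry before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append(
        {"event": event, "prev": prev_hash, "hash": _entry_hash(prev_hash, event)}
    )


def verify(log: list[dict]) -> bool:
    """Recompute every hash in order; any edited or reordered entry fails."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


audit_log: list[dict] = []
append_event(audit_log, {"actor": "evaluator_a", "action": "score", "factor": "technical"})
append_event(audit_log, {"actor": "agent_1", "action": "draft_rationale", "factor": "technical"})

intact = verify(audit_log)
print(intact)
```

A hash chain detects after-the-fact edits but does not by itself prevent them. Someone with write access could recompute the whole chain, so a deployed system would also need write-once storage or an externally held copy of the latest hash.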

Operational Hardening

To protect agency workloads, Quantify includes:

Container isolation and runtime scanning

Real-time intrusion detection hooks

Automated vulnerability patching pipelines (agency-configurable)

Configurable data-retention and secure-deletion policies

Network segmentation for inference, storage, and API services

Additionally, Quantify supports offline or fully air-gapped deployments, ensuring no external dependencies or outbound communication.

No Vendor Lock-In, No External Data Exposure

Quantify ensures:

No external cloud connectivity is required

No proposal content leaves the agency enclave

No data is shared with LLM providers

Agencies retain full control of their models, data, memory, logs, and infrastructure

This ensures full compliance with federal procurement integrity laws, classification handling requirements, and agency governance policies.

Designed for High-Assurance, Mission-Critical Evaluations

Quantify’s security model is built not only to protect sensitive acquisition data—but to ensure the integrity, fairness, defensibility, and repeatability of the government evaluation process itself.

Its combination of zero-trust enforcement, patented memory isolation layers, secure AI agent governance, and immutable auditability makes it the most secure agentic evaluation platform available to federal agencies.

-

Pricing is on a per-user, per-year basis. Please contact our sales team for more details on pricing via the Get Started link below.

-

Please contact our sales team for more details on how to buy via the Get Started link below.